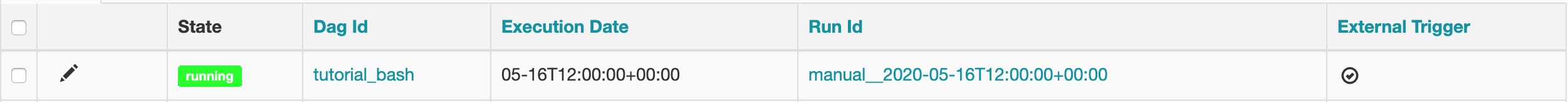

Apache Airflow is an open-source process automation and scheduling application that allows you to programmatically author, schedule, and monitor workflows. In organizations, Airflow is used to organize complex computing operations, create data processing pipelines, and run ETL processes. One example of an Airflow deployment, running on a distributed set of five nodes in a Kubernetes cluster, is shown in the accompanying image. When a DAG submits a task, the KubernetesExecutor requests a worker pod from the Kubernetes API. The worker pod then runs the task, reports the result, and terminates.

By default, the report shows the pipeline results for all DAG Run IDs. To narrow the results, select or type the DAG Run ID in the DAG Run ID filter variable. The default time interval is 24 hours. To view results from before the last 24 hours, select the required time interval. To view the results in sequential order, click the Date column in the Status Details table.

When running on my local machine, the logs are printed to the console after setting logging.basicConfig(level=logging.DEBUG). When running code from an imported module, e.g. boto3, the logs are not printed to the Airflow log. Setting the environment variable AIRFLOW__LOGGING__LOGGING_LEVEL didn't help either.

I want to use the Python library rapidjson in my Airflow DAG. Whenever I merge something into the master or test branch, the changes are automatically configured to reflect on the Airflow UI. Under the EC2 instances, I see three different instances: scheduler, webserver, and workers. I connected to these three individually via Session Manager. Once the terminal opened, I installed the library using pip install python-rapidjson. I also verified the installation using pip list. Now, I import the library in my DAG's code simply like this: import rapidjson. However, when I open the Airflow UI, my DAG has an error: No module named 'rapidjson'. Are there additional steps that I am missing? Do I need to import it into my Airflow code base in any other way as well? Within my Airflow git repository, I also have a "requirements.txt" file. The library is listed there as well, but I do not know how to actually install it. I tried this within the Session Manager's terminal as well. However, the terminal is not able to locate this file. In fact, when I do "ls", I don't see anything.
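One possible cause of a "No module named" error after a successful pip install is that pip installed the package into a different Python environment than the one Airflow uses to parse DAGs. The post does not confirm this, so treat it as an assumption. As a minimal sketch, the hypothetical helper below (the name `describe_module` is made up for illustration) reports which interpreter is running and whether a module can be found from it. Running it both in the Session Manager terminal and from inside a DAG file would show whether the two environments differ.

```python
import importlib.util
import sys


def describe_module(name: str) -> dict:
    """Report whether the top-level module `name` is importable by this interpreter.

    Hypothetical helper for illustration; not part of Airflow or rapidjson.
    """
    # find_spec returns None for a top-level module that cannot be located.
    spec = importlib.util.find_spec(name)
    return {
        "module": name,
        "interpreter": sys.executable,  # path of the Python binary running this code
        "found": spec is not None,
        "location": getattr(spec, "origin", None),  # file the module would load from
    }


if __name__ == "__main__":
    # "json" ships with Python; "rapidjson" is only found if python-rapidjson
    # is installed for this particular interpreter.
    for module_name in ("json", "rapidjson"):
        print(describe_module(module_name))
```

If the interpreter path printed inside the DAG differs from the one printed in the terminal, the package would need to be installed with that other interpreter's pip (for example through the deployment's "requirements.txt" mechanism, whatever form that takes in this setup).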
